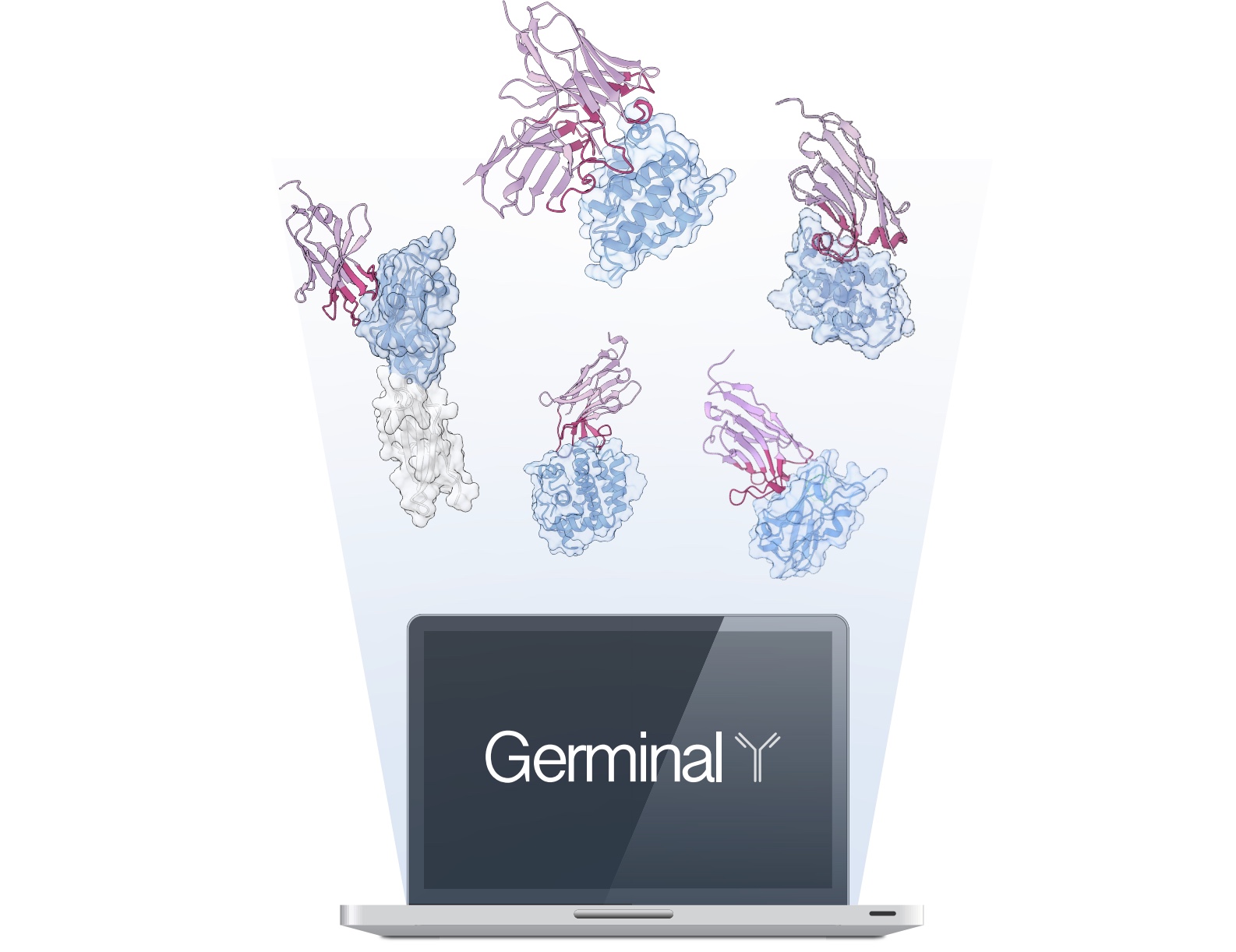

Building “Germinal” for AI-Designed Antibody Molecules

A conversation with Brian Hie, Xiaojing Gao, and Santiago Mille Fragoso

For decades, creating custom antibodies has required either injecting animals with target proteins and hoping for useful immune responses, or screening millions of random sequences until something worked. Now, a collaboration between Stanford University and Arc Institute has developed "Germinal," an AI system that designs functional nanobodies with unprecedented efficiency. The work appears in a preprint posted September 24 on bioRxiv.

Germinal centers on solving a fundamental challenge in computational antibody design: how to create molecules that both bind their intended targets and are biologically realistic. Previous approaches using structure prediction alone produced rigid, unnatural interfaces, while sequence-based methods lacked the structural precision needed for specific binding.

The research team's solution combines two complementary AI systems. AlphaFold-Multimer provides structural predictions for stable protein-protein interactions, while IgLM, an antibody-specific language model, ensures the sequences resemble real antibodies. The researchers reconciled the two programs by developing algorithms that find where the systems agree, building on earlier work like BindCraft that showed how to use sequence-to-structure AI prediction models for binder design. They also added custom rules that force the designs to behave like real antibodies with working binding sites.

Tested against four protein targets, Germinal achieved success rates of 4-22% while requiring only dozens of experimental validations instead of the thousands typically needed. The team's best nanobody binds its target with 140 nanomolar affinity, approaching therapeutic relevance. Importantly, mutagenesis experiments confirmed that the designed nanobodies bind at the intended epitope.
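
A rough way to see why dozens of tests can suffice: if each design binds independently with probability equal to the reported success rate p, the chance that a batch of n designs contains at least one binder is 1 - (1 - p)^n. The independence assumption and the batch sizes below are illustrative, not taken from the preprint.

```python
def p_at_least_one_hit(success_rate: float, n_tested: int) -> float:
    """Probability that at least one of n_tested designs binds, assuming each
    design succeeds independently with probability success_rate (a simplification)."""
    return 1.0 - (1.0 - success_rate) ** n_tested


def expected_hits(success_rate: float, n_tested: int) -> float:
    """Expected number of binders in a batch under the same assumption."""
    return success_rate * n_tested


# Success rates at the two ends of the reported 4-22% range; batch sizes are hypothetical.
for rate in (0.04, 0.22):
    for n in (24, 48):
        print(f"rate={rate:.0%} n={n}: expected hits={expected_hits(rate, n):.1f}, "
              f"P(>=1 hit)={p_at_least_one_hit(rate, n):.3f}")
```

At the low end (4%), a batch of 48 designs still has roughly an 86% chance of containing at least one binder under this simple model.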

The approach makes custom antibody development accessible to labs without specialized screening facilities. The team has also made their methods open source, anticipating rapid adoption and improvement by the research community.

In this technical discussion, three of the co-authors explore the computational innovations behind Germinal, its current capabilities and limitations, and the implications for both basic research and therapeutic development. The conversation includes:

- Brian Hie (X: @BrianHie), an Assistant Professor of Chemical Engineering at Stanford University, the Dieter Schwarz Foundation Stanford Data Science Faculty Fellow, and Arc Institute Innovation Investigator in Residence;

- Xiaojing Gao (X: @SynBioGaoLab), an Assistant Professor of Chemical Engineering at Stanford University;

- Santiago Mille Fragoso (X: @santimillef), a PhD student in Gao's lab and the study's first author.

What specific algorithmic innovations made this work possible, beyond simply combining structural and sequence models?

Brian Hie: A critical advance was developing algorithms that reconcile AlphaFold's structural predictions with IgLM's sequence preferences through backpropagation. We essentially tell AlphaFold, "I want this binding structure; what sequences do you suggest?" and then iterate on the best outcomes.
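
The idea of optimizing a sequence by backpropagating through scoring models can be illustrated with a toy. In the sketch below, each position holds logits over the 20 amino acids, a softmax turns them into a soft sequence, and gradient descent lowers a loss that mixes a structure term with a language-model log-probability term. The fixed per-residue score vectors are hypothetical stand-ins for AlphaFold-Multimer and IgLM; the real method backpropagates through those networks, and its loss weights and schedule are not reproduced here.

```python
import math

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def softmax(logits):
    """Numerically stable softmax over one position's amino-acid logits."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def loss_and_grad(logits, structure_score, lm_logprob, lm_weight):
    """Toy per-position loss L = -sum_a p_a * v_a, with p = softmax(logits) and
    v_a = structure_score[a] + lm_weight * lm_logprob[a].
    Chain rule through the softmax gives dL/dz_b = -p_b * (v_b - sum_a p_a * v_a)."""
    p = softmax(logits)
    v = [s + lm_weight * lq for s, lq in zip(structure_score, lm_logprob)]
    expected_v = sum(pa * va for pa, va in zip(p, v))
    grad = [-pb * (vb - expected_v) for pb, vb in zip(p, v)]
    return -expected_v, grad


def design(structure_scores, lm_logprobs, lm_weight=0.5, lr=1.0, steps=200):
    """Gradient descent on relaxed (soft) sequence logits, then discretize."""
    n_aa = len(AMINO_ACIDS)
    logits = [[0.0] * n_aa for _ in structure_scores]
    for _ in range(steps):
        for i, row in enumerate(logits):
            _, grad = loss_and_grad(row, structure_scores[i], lm_logprobs[i], lm_weight)
            logits[i] = [z - lr * g for z, g in zip(row, grad)]
    # Pick the highest-logit residue at each position.
    return "".join(AMINO_ACIDS[row.index(max(row))] for row in logits)


def peaked(favored, high, low):
    """Per-residue vector with `high` at the favored amino acid and `low` elsewhere."""
    return [high if aa == favored else low for aa in AMINO_ACIDS]


# Hypothetical inputs for a 2-residue "CDR": the structure stand-in prefers Y then W;
# the language-model stand-in (log-probabilities, 0.81 + 19 * 0.01 = 1) prefers Y then S.
STRUCTURE_SCORES = [peaked("Y", 2.0, 0.0), peaked("W", 1.0, 0.0)]
LM_LOGPROBS = [peaked("Y", math.log(0.81), math.log(0.01)),
               peaked("S", math.log(0.81), math.log(0.01))]

print(design(STRUCTURE_SCORES, LM_LOGPROBS, lm_weight=0.0))  # structure term only
print(design(STRUCTURE_SCORES, LM_LOGPROBS, lm_weight=0.5))  # structure + language model
```

Under these made-up scores, lm_weight=0.0 yields "YW" and lm_weight=0.5 yields "YS": the language-model term overrides the structure stand-in at the second position, a toy version of the disagreement the two models have to be reconciled on.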

Xiaojing Gao: The devil is in the details: we also added antibody-specific loss functions. We enforce that binding occurs through CDR loops rather than framework regions, since framework binding indicates non-specific interactions. We also minimize secondary structures at the binding interface, since CDRs should be flexible loops. This builds on BindCraft's approach but adds the language model component and antibody-specific constraints nobody else was using.
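
The two antibody-specific constraints described here can be expressed as penalties on a predicted complex. The sketch below is hypothetical: it scores a discrete prediction by the fraction of interface residues in framework regions and the fraction in helices or strands, whereas a gradient-based pipeline would need differentiable versions of these terms computed from the structure model's outputs. The names `Residue` and `interface_penalty` and the equal weights are our assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Residue:
    index: int
    region: str  # "CDR" or "FR" (framework); assumed to come from an antibody numbering step
    ss: str      # predicted secondary structure: "H" helix, "E" strand, "L" loop/coil


def interface_penalty(residues, interface, fr_weight=1.0, ss_weight=1.0):
    """Hypothetical antibody-specific penalty on a predicted complex.

    - framework term: fraction of interface residues in framework regions
      (framework binding suggests a non-specific interaction);
    - secondary-structure term: fraction of interface residues in helices/strands
      (CDRs are expected to bind as flexible loops).
    Lower is better; 0.0 means an all-loop, all-CDR interface.
    """
    iface = [r for r in residues if r.index in interface]
    if not iface:
        # No predicted contacts at all: score as the worst case.
        return fr_weight + ss_weight
    n = len(iface)
    frac_fr = sum(r.region == "FR" for r in iface) / n
    frac_ss = sum(r.ss in ("H", "E") for r in iface) / n
    return fr_weight * frac_fr + ss_weight * frac_ss


# Toy nanobody fragment: residues 0-3 are a framework strand, 4-9 a CDR loop.
nanobody = [Residue(i, "FR", "E") for i in range(4)] + [Residue(i, "CDR", "L") for i in range(4, 10)]

print(interface_penalty(nanobody, {5, 6, 7, 8}))  # CDR-only, loop-only contacts
print(interface_penalty(nanobody, {2, 3, 6, 7}))  # half the contacts on the framework strand
```

The first interface scores 0.0 and the second 1.0, so an optimizer minimizing this term would be pushed toward binding through CDR loops.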

Many groups have tried inverting AlphaFold for protein design. What made your approach work specifically for antibodies?

Santiago Mille Fragoso: AlphaFold is very good at predicting secondary structures, but antibody CDRs, the regions that bind antigens, are loopy and flexible rather than structured like beta sheets or alpha helices. By combining AlphaFold with a language model that biases designs toward realistic CDR sequences, we get designs that are structurally confident and whose sequences look like real nanobodies. Our antibody-specific loss functions optimize for CDR binding rather than framework binding, which provides the specificity that makes antibodies work.

How do your computational requirements compare to other AI-driven approaches?

Santiago Mille Fragoso: We are roughly comparable to other methods. We need a GPU with at least 40 gigabytes of VRAM, which is available in Google Colab, and about a week of runtime per target to get a binder like the ones reported in the preprint. The difference is that other methods, such as RFdiffusion for antibodies, require millions of designs while we need hundreds, but we're slower per design because we backpropagate through both AlphaFold and the language model.

How does your experimental validation differ from traditional antibody characterization?

Xiaojing Gao: We developed a democratized two-stage pipeline. First, a luciferase-based assay where nanobodies fused to split luciferase produce light when binding occurs, which could narrow hundreds of candidates to tens. Only promising hits move to more laborious and expensive Octet measurements for binding kinetics, followed by alanine scanning mutagenesis to confirm epitope-specific binding.
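
The two-stage funnel can be sketched as a pair of filters. Everything below is hypothetical: the 3x-over-background cutoff for the split-luciferase screen, the 10x KD loss that marks an alanine-scan hotspot, the residue labels, and all data values are illustrative choices, not the authors' thresholds or measurements.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    luminescence: float  # split-luciferase readout, arbitrary units


def stage1_triage(candidates, background, min_fold=3.0, max_hits=None):
    """Stage 1 (cheap): keep designs whose split-luciferase signal is at least
    min_fold times background, strongest first; optionally cap how many go on
    to stage 2 (Octet kinetics)."""
    hits = [c for c in candidates if c.luminescence >= min_fold * background]
    hits.sort(key=lambda c: c.luminescence, reverse=True)
    return hits if max_hits is None else hits[:max_hits]


def binding_hotspots(kd_wildtype, kd_alanine_mutants, min_fold_loss=10.0):
    """Stage 2 follow-up: positions whose alanine substitution raises KD
    (weakens binding) by at least min_fold_loss relative to wild type."""
    return {pos for pos, kd in kd_alanine_mutants.items()
            if kd / kd_wildtype >= min_fold_loss}


# Hypothetical screen with a background signal of 100 a.u.
pool = [Candidate("d1", 950.0), Candidate("d2", 120.0), Candidate("d3", 410.0)]
shortlist = stage1_triage(pool, background=100.0)
print([c.name for c in shortlist])

# Hypothetical alanine scan (KD in nM); compare hotspots with the intended epitope.
hotspots = binding_hotspots(100.0, {"Y56": 5000.0, "E58": 160.0, "R113": 2100.0})
intended_epitope = {"Y56", "R113", "D122"}
print(sorted(hotspots), hotspots <= intended_epitope)
```

Here d1 and d3 pass the luminescence cutoff, and both alanine-scan hotspots fall inside the intended epitope set, which is the pattern one would look for when confirming epitope-specific binding.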

Your success rates varied across targets. What determines success for different proteins?

Santiago Mille Fragoso: We saw 4-22% success rates across PD-L1, IL3, IL20, and BHRF1. We don't yet fully understand what makes some targets more amenable, but we also haven't tested many targets. We suspect it relates to the binding site's structural and biochemical properties, but establishing clear design rules will require more work.

How do your binding affinities compare to therapeutic requirements?

Xiaojing Gao: We consistently achieved submicromolar affinities; our best reached 140 nanomolar. We're in the therapeutically relevant range but not yet matching the tightest traditional binders. Our current version needs complementary optimization approaches, but we're getting binding strengths usable for basic research and within striking distance for therapeutics.
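
The affinity figures can be put on an energy scale with the standard relation ΔG° = RT ln(KD / 1 M). The sketch below is our illustration, assuming 25 °C and simple 1:1 binding with the binder in excess; the 10 nM reference point stands in for the tens-of-nanomolar range often wanted for therapeutics.

```python
import math

R_KCAL = 1.987204e-3  # gas constant, kcal/(mol*K)


def binding_free_energy(kd_molar, temperature_k=298.15):
    """Standard binding free energy dG = RT ln(KD / 1 M), in kcal/mol."""
    return R_KCAL * temperature_k * math.log(kd_molar)


def fraction_bound(binder_molar, kd_molar):
    """Equilibrium target occupancy for 1:1 binding with the binder in excess."""
    return binder_molar / (binder_molar + kd_molar)


dg_140nm = binding_free_energy(140e-9)
dg_10nm = binding_free_energy(10e-9)
print(f"140 nM: {dg_140nm:.2f} kcal/mol; 10 nM: {dg_10nm:.2f} kcal/mol; "
      f"gap: {dg_140nm - dg_10nm:.2f} kcal/mol")
print(f"occupancy of a 140 nM binder at 1 uM: {fraction_bound(1e-6, 140e-9):.3f}")
```

Under these assumptions, 140 nM corresponds to about -9.35 kcal/mol and 10 nM to about -10.91 kcal/mol, a gap of roughly 1.6 kcal/mol, and a 140 nM binder at 1 µM occupies about 88% of its target.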

Brian Hie: Traditional methods sometimes yield one or two really tight binders, but our approach provides more consistent results across the tested range.

What types of applications do you see as most promising?

Brian Hie: For therapeutics, the advantage is incorporating specific design criteria. Unlike animal immunization where you get what you get, computational design lets you target specific epitopes. This is crucial for infectious diseases where you want to bind regions of pathogens that are less likely to mutate. You can also optimize for thermal stability, reduced immunogenicity, or other therapeutic properties.

Xiaojing Gao: For basic research, this quantitatively transforms antibody access. Previously, custom antibody projects required weighing months of work against uncertain outcomes. Now that barrier is essentially gone for many applications.

What are the current limitations?

Santiago Mille Fragoso: The main challenges are binding affinity and computational requirements. We're in the hundreds of nanomolar range rather than the tens of nanomolar ideal for some therapeutics. Right now, this speeds up initial discovery, but additional optimization is needed for clinic-ready therapeutics.

Xiaojing Gao: Also, we've only demonstrated single-domain nanobodies. Most therapeutic antibodies bind through two variable domains, as in Fabs or scFvs, which are our next target for extension.

Why did you make this open source?

Xiaojing Gao: Once people know this can be done, there's no real technical barrier to reproduction. We saw this with AlphaFold 3: people created reproductions before the official release. It's better to give this to the community for collective advancement than to try to maintain artificial barriers. My first obligation is to academics, and this approach maximizes scientific progress.

How did this cross-institutional collaboration come together?

Santiago Mille Fragoso: I was working on protein sensors and realized there was a gap in available binding biomolecules. I had already been in touch with Brian after his talk at the Stanford Synthetic Biology Seminar and knew about his work using language models for antibody engineering. The project was very student-driven. We had a lean, scrappy, super-motivated, interdisciplinary team where removing any one person would have caused failure. It was very fun to work with all of them.

What was it like when you first saw the results working?

Brian Hie: I was standing in the lab at Arc around 6-7 PM when the first Octet results came in, and we clapped. I saw the first hit live and started shouting, asking whether it was a positive control or an actual design. It was a design.

Santiago Mille Fragoso: What made it exciting was we'd had a previous round that completely failed. Designs didn't even express. After seeing nothing in that first attempt, seeing actual binding was incredible. The computational model made sense to us, so we kept pushing despite initial failure.

Any advice for academic groups taking on industry-dominated problems?

Xiaojing Gao: I'd lean in on academia's strongest advantages: assemble an adventurous team with diverse, complementary expertise, be open and collaborative with colleagues, and be a little crazy and unafraid to fail occasionally.

Brian Hie: The Arc Institute collaboration and support was crucial. It provided the conceptual freedom and resources needed for this kind of ambitious, interdisciplinary research. Without that support structure, this wouldn't have been possible.